Before the Headline

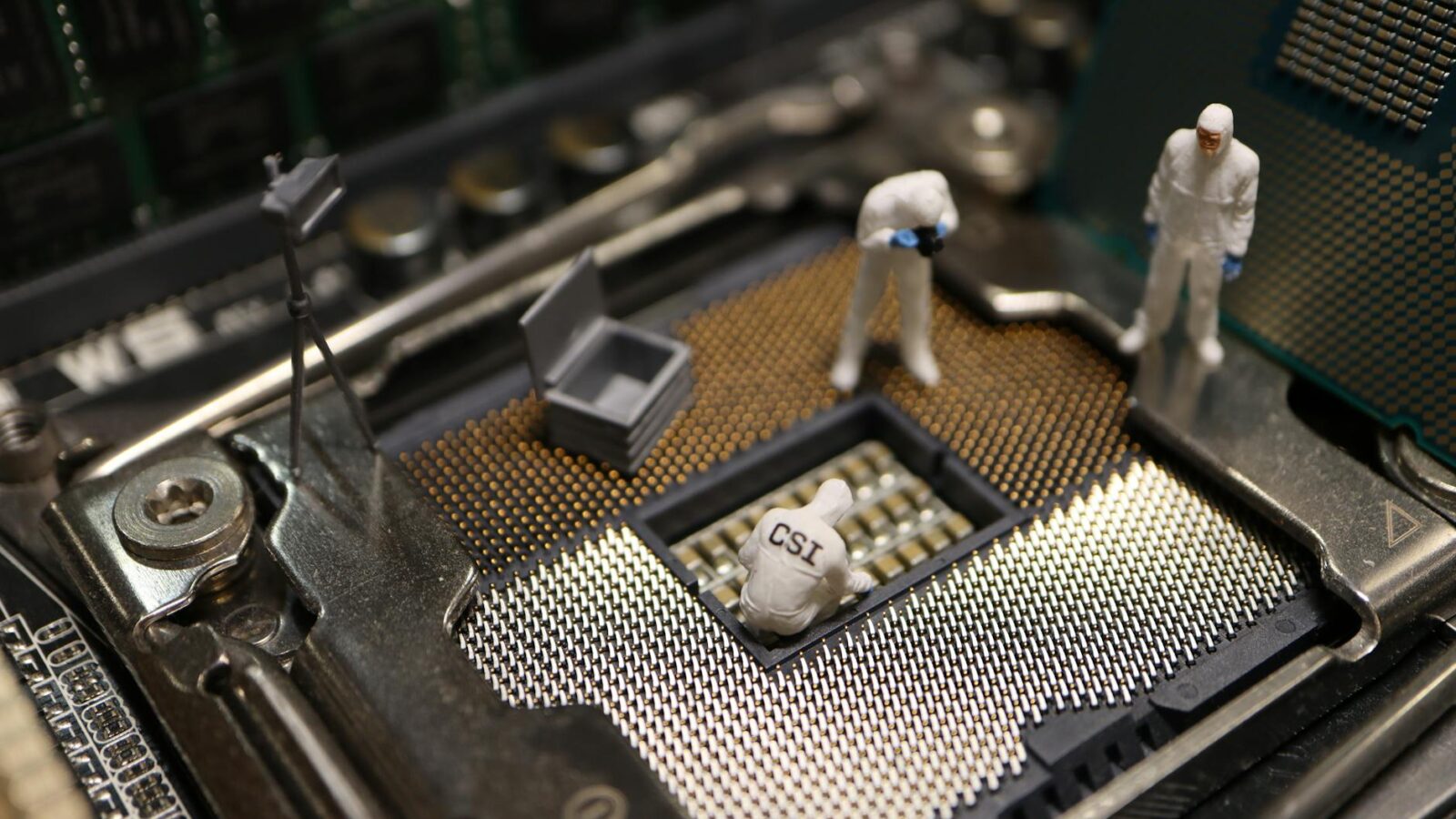

The integration of artificial intelligence into everyday life has raised questions that trail closely behind every innovation. From self-driving cars to medical diagnostics, technology has consistently advanced faster than the legal frameworks meant to govern it, leaving a wide gap in accountability. That is why the investigation into ChatGPT’s alleged involvement in the murders of two University of South Florida students is more than a sensational headline: it marks a pivotal moment in the ongoing debate over the ethical use of technology.

Florida’s Attorney General has opened an investigation into whether ChatGPT, OpenAI’s AI chatbot, played a role in the events leading up to the deaths of the two students. The inquiry arrives amid broader debate about where technology and crime intersect, particularly in cases involving violence. Its implications reach well beyond this tragedy: it could signal a shift toward legislation that defines AI’s legal responsibilities.

Mainstream coverage may dwell on the dramatic image of AI in a criminal context, but the more important question is what legal accountability should accompany such technology. Working through the murky question of AI’s culpability in violent crime could lead to a future in which misuse is met with regulation as stringent as that governing the automotive and pharmaceutical industries. As the ramifications of this inquiry play out, the need for clear regulatory frameworks becomes hard to ignore.

What We Know

- Florida’s Attorney General is investigating ChatGPT’s alleged involvement in the murders of two USF students.

- The investigation signals increasing legal scrutiny of AI technologies in the context of violent crime.

- Any legal precedent set by this investigation could influence future legislation on AI accountability.

What We Don’t Know Yet

- What specific evidence will be presented linking ChatGPT to the murders?

- How will this investigation influence public and corporate perceptions of AI technology?

- What legislative actions could emerge from this inquiry, and how will they be structured?

Between the Lines

What mainstream reports often overlook is the broader cultural and ethical debate simmering beneath this investigation. Public dialogue about AI’s role in society has largely sidestepped the question of responsibility, and the Florida AG’s probe may force a reckoning on that front. Its legal implications could extend beyond this single case, prompting a closer examination of how culpability is assigned when AI is involved in a criminal act.

There is also a tension between public excitement about AI advances and fear of their misuse. Even as the public marvels at what AI can do, the fear generated by incidents like this one complicates the conversation. The silence of major technology companies in response to these concerns suggests a reluctance to engage with the ethical responsibilities that come with their innovations.

What This Means for You

- For investors: Understanding the legal landscape around AI could inform future investments in technology companies.

- For commuters: As autonomous driving technology develops, knowing who is liable in an accident becomes paramount.

- For technologists: The outcome of this investigation may force a reevaluation of AI design and its ethical implications, shaping future projects.

After the Headline

As this investigation unfolds, public sentiment toward AI will be worth watching closely, since growing scrutiny may fuel demands for regulation. Legislative sessions in the coming year are the key dates to watch, as discussions of AI accountability are likely to gain traction there; some predictions suggest that at least three U.S. states will introduce related legislation by the end of Q2 2025.

Indicators to track include any new bills that specifically address AI’s legal responsibilities in violent crime cases. As the conversation evolves, legislative debate over AI ethics and responsibility could increase measurably, reshaping how society perceives and interacts with these technologies.

TIMES Take: This investigation may serve as a catalyst for rethinking AI’s place in the legal framework. The consequences of its misuse demand urgent attention, and the answers reached here could lay the groundwork for future regulation.